WebXR retro computer simulation

You can build your childhood dream in immersive 3D and share it with the world on a website! To me, that is amazing.

This is a story of how I fell in love with Three.js & WebXR, the value of creative side-projects, and the people who helped me along the way in creating xr.bbcmic.ro.

Background

My first computer

The ‘80s were simpler times. Your computer only knew what you told it about yourself. You switched it on and it instantly greeted you with a prompt. No in-app purchases, no tracking, no ads. That honesty and immediacy is something tech should still aspire to.

Back then, few adults had used a computer before - so kids were at the forefront. There’s a whole generation of innovators in the UK who were inspired by this formative experience with a BBC Micro or ZX Spectrum at school or home.

How it started

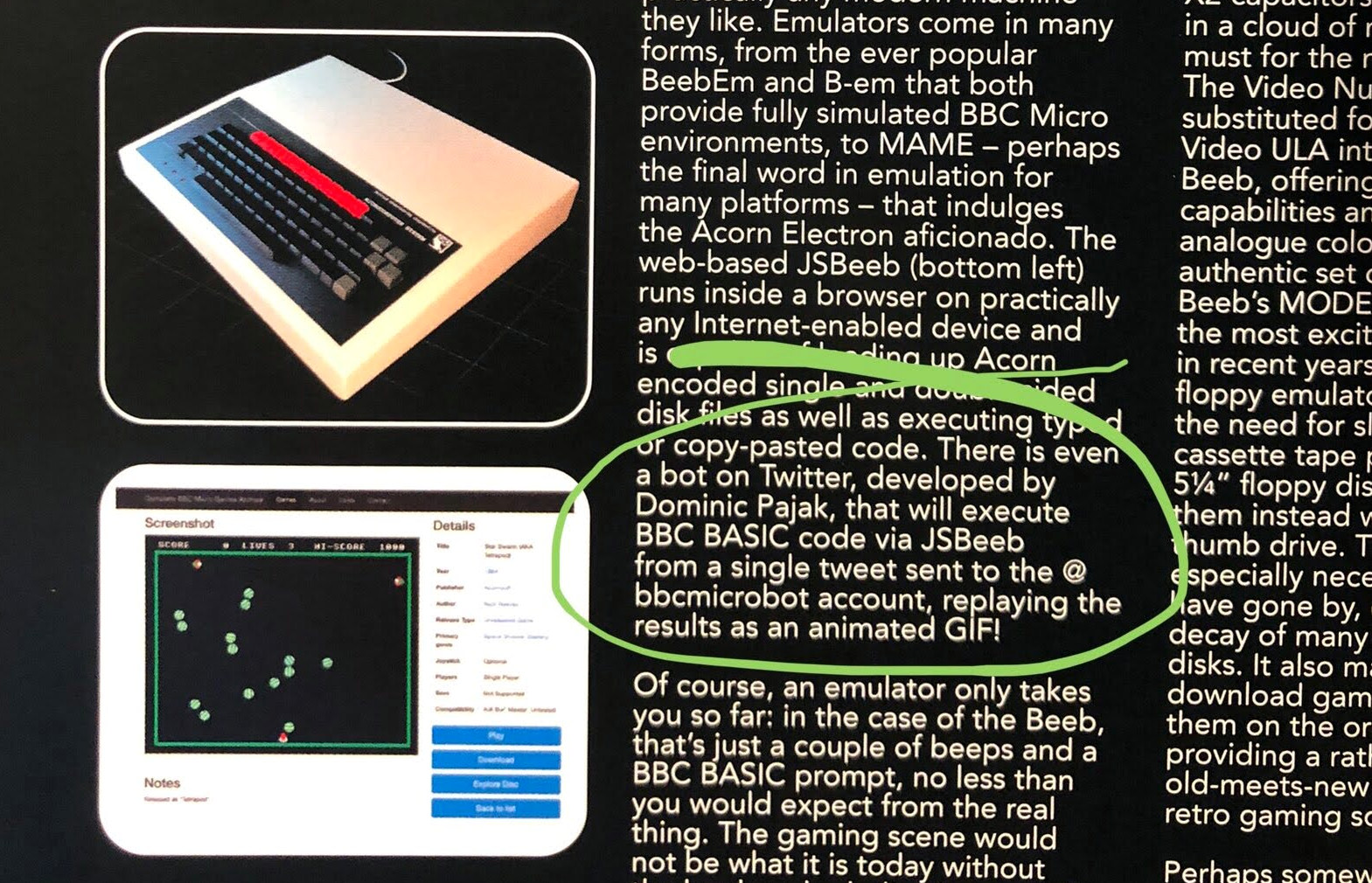

One unexpected result of the COVID-19 pandemic was a boom in retrocomputing. Prices of yellowing plastic electronic junk surged on eBay. People stuck at home found an escape in nostalgia for those simpler times - and a new online community. Although it would take me until 2024 to actually get around to finishing it, the xr.bbcmic.ro project was born of these circumstances. I saw a tweet from fellow Beeb fan David Groom saying that I was mentioned in a book. Yep, I'd finally made it. There was a one-sentence mention of me lurking near the back. On the same page was a render of a BBC Micro:

The book mentioned BBC Micro Bot - another pandemic era side-project I built in 2020, using Matt Godbolt’s groundbreaking emulator-in-a-browser, JSbeeb. That’s a whole other story. I guess what’s relevant here is it meant I knew how JSbeeb worked.

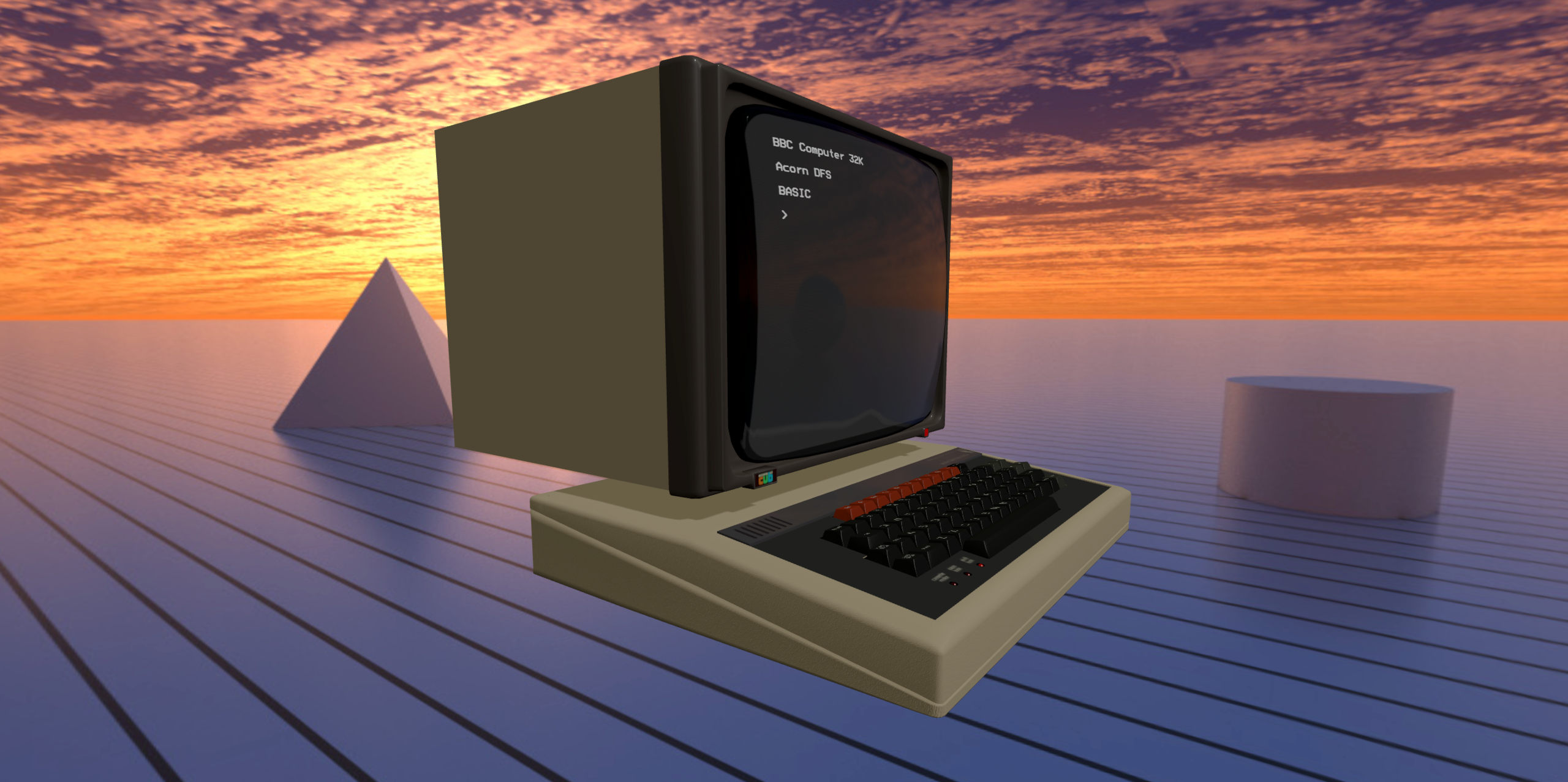

Seeing the BBC Micro render in the book, it struck me - I could bring that 3D model to life! Although it's only rendered as a 2D plane, JSbeeb’s screen is actually a WebGL texture. So I could put the two together with Three.js and have a full interactive 3D simulation of the machine in a browser! It wouldn’t even take long to hack together - let’s go!

It was approaching the 40th anniversary of the BBC Micro; just enough time to build a free 3D simulation for the web (later dubbed Virtual Beeb) and get it out for the anniversary in December 2021 as a tribute to our favorite computer.

3D Model

Blender model

I contacted artist Ant Mercer to get permission to use the excellent Blender model he’d created for IDESINE’s book Acorn: A World In Pixels so I could export it as a GLTF and into a Three.js webpage.

I then built a proof of concept with the JSbeeb emulator live updating the texture of the screen of the BBC Micro model in less than a day - it looked a little rough, but it was magic!

BBC Micro Bot had earned me an honorary membership of Bitshifters from another source of inspiration, Kieran Connell - most of the crew are veteran games developers, and so their feedback and advice on the Virtual Beeb prototype was gold. The glimmer of coolness I’d built was enough to inspire my open-source emulator hero and Compiler Explorer creator Matt Godbolt to volunteer to help out. Computer graphics guru Paul Malin also lent his shader skills and expert eye to the project.

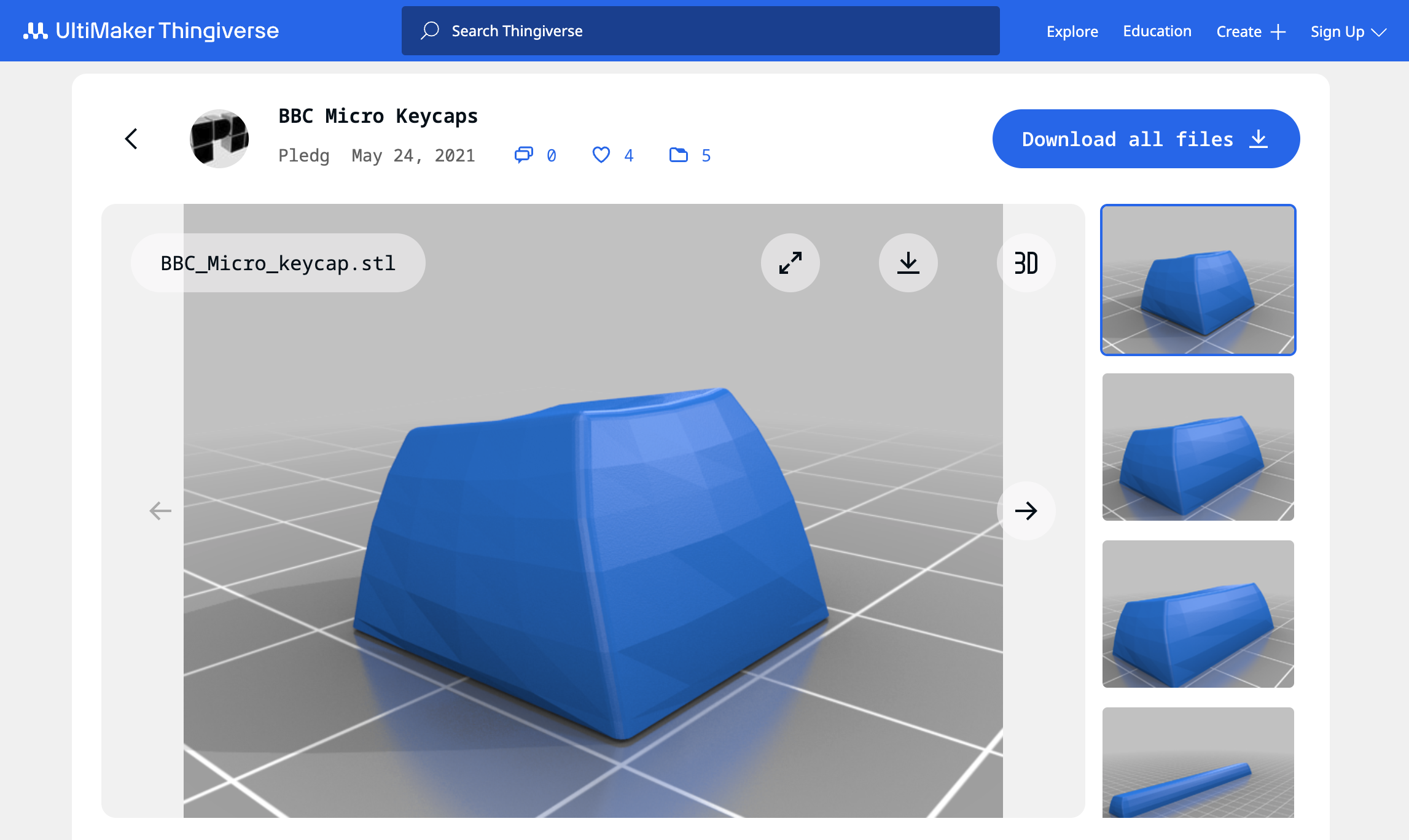

Keycap STLs

The original Blender model was initially used without much modification - except for some additional textures, material tweaks (for GLTF compatibility) and polygon reduction. But I eventually found more accurate spherical keycap models by Paul Ledger on Thingiverse (intended for 3D-printed replacements of real keys!), and implemented them as an instanced mesh to save on draw calls, an optimization for WebXR.

Sound design

Samples

The BBC Micro’s mechanical keyboard gave the machine an iconic look - and sound. I recorded samples of key presses of each size of key at varying velocities. Matt did a whole load of work hooking things together including (vitally) the keyboard and pointer interaction for Virtual Beeb. Most satisfyingly, you could drag your pointer across the mechanical keys and hear a sound like a cat pawing around on them. The clacky mechanical keyboard sounded great!

I've since seen claims all 74 keys were sampled individually, but even I'm not that obsessive! (Although close.) There were 18 samples in total: a 1U key (i.e. regular key size), a ~2U key (Shifts, Tab and Return), and an 8U key (Space bar), each sampled with upstroke and downstroke sounds separately, at 3 different velocities. The variation avoids an unnaturally repetitious ta-ta-ta in the simulation and adds some realism.
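To make the arithmetic concrete (3 key sizes x 2 strokes x 3 velocities = 18), here is a minimal sketch of how a sample could be picked per key event. The sample ids, the key names and the random choice of velocity are all assumptions for illustration, not the project's actual code.

```javascript
// Hypothetical sample naming: 3 key sizes x 2 strokes x 3 velocities = 18 samples.
const KEY_SIZES = ['1u', '2u', 'space'];
const STROKES = ['down', 'up'];
const VELOCITIES = ['soft', 'medium', 'hard'];

// Keys assumed to use the wider ~2U samples (key names are illustrative).
const WIDE_KEYS = new Set(['SHIFT_LEFT', 'SHIFT_RIGHT', 'TAB', 'RETURN']);

function keySizeFor(keyName) {
  if (keyName === 'SPACE') return 'space';
  return WIDE_KEYS.has(keyName) ? '2u' : '1u';
}

// Enumerate every sample id, e.g. to preload the audio files.
function allSampleIds() {
  const ids = [];
  for (const size of KEY_SIZES) {
    for (const stroke of STROKES) {
      for (const velocity of VELOCITIES) ids.push(`${size}-${stroke}-${velocity}`);
    }
  }
  return ids;
}

// Pick the sample for one key event. The velocity is chosen at random (an
// assumption of this sketch); `random` is injectable so the choice is testable.
function pickSample(keyName, stroke, random = Math.random) {
  if (!STROKES.includes(stroke)) throw new Error(`unknown stroke: ${stroke}`);
  const velocity = VELOCITIES[Math.floor(random() * VELOCITIES.length)];
  return `${keySizeFor(keyName)}-${stroke}-${velocity}`;
}
```

Varying the velocity on each press is what breaks up the machine-gun effect when the same key is hit repeatedly.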

Positional Audio

For the WebXR port I implemented the same 18 audio samples, but this time used Three.js Positional Audio.

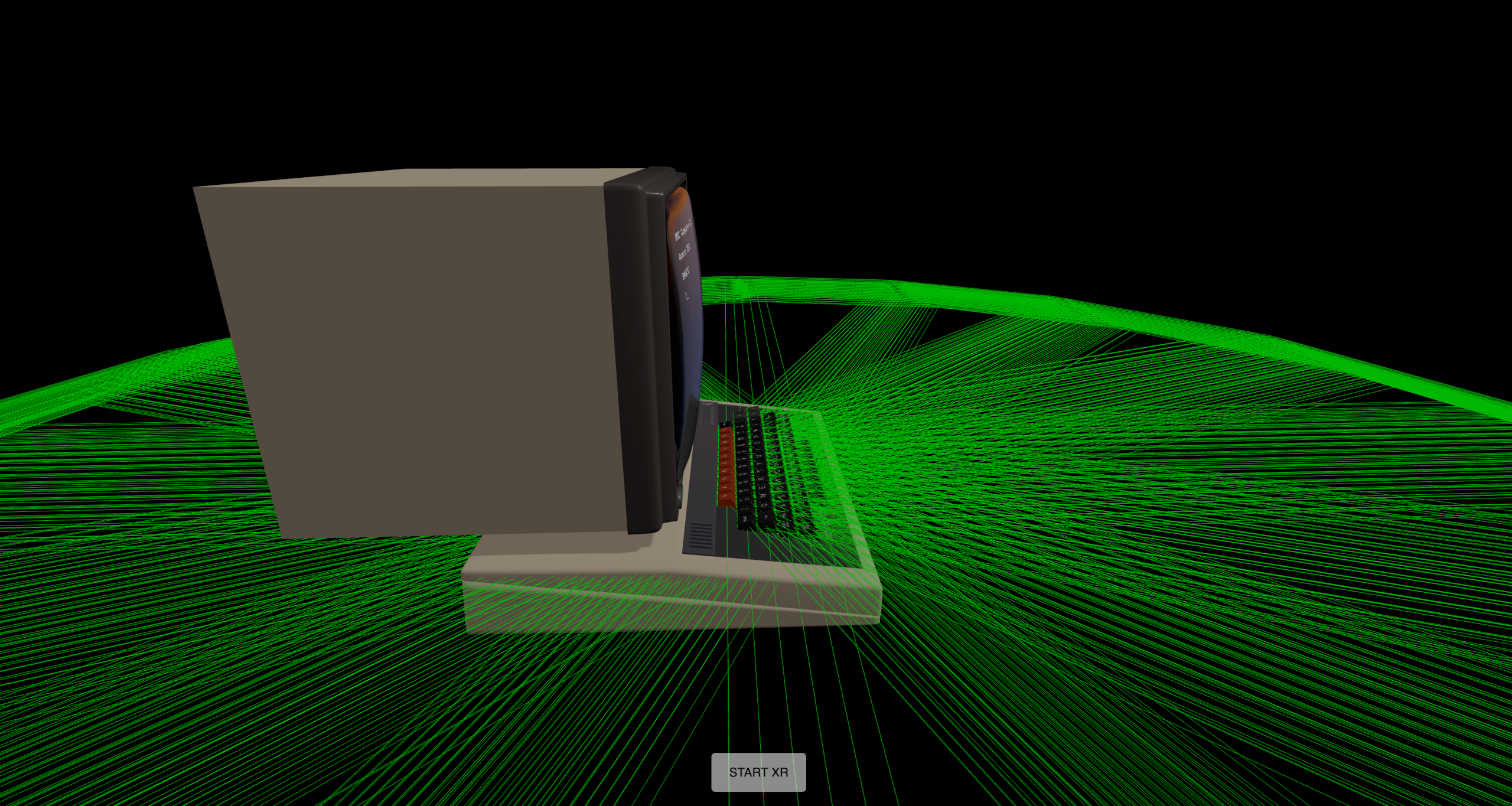

Each of the 74 keys does have a positional audio object attached, so when it's pressed the sample is triggered at a unique position in immersive 3D space. You can see these positional audio helpers in green wireframe in the image (these were only visible during development).
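A rough sketch of the one-emitter-per-key idea, using Three.js's `PositionalAudio`. It assumes each key is represented by its own `Object3D` with a `name`, that `listener` is a `THREE.AudioListener` already added to the camera, and that the reference distance is a made-up value; the `THREE` namespace is passed in so the snippet does not depend on a particular import path.

```javascript
// Attach one PositionalAudio emitter to each key object so a sample plays
// from that key's position in the scene.
function attachKeyEmitters(THREE, listener, keyObjects) {
  const emitters = new Map();
  for (const key of keyObjects) {
    const sound = new THREE.PositionalAudio(listener);
    sound.setRefDistance(0.2); // assumed value, tune by ear
    key.add(sound);            // the emitter inherits the key's world position
    emitters.set(key.name, sound);
  }
  return emitters;
}

// Play an already-decoded AudioBuffer from the emitter of the pressed key.
// Returns false if no emitter is registered under that key name.
function playKeySample(emitters, keyName, audioBuffer) {
  const sound = emitters.get(keyName);
  if (!sound) return false;
  if (sound.isPlaying) sound.stop(); // restart rather than overlap on fast repeats
  sound.setBuffer(audioBuffer);
  sound.play();
  return true;
}
```

Because the emitters are children of the key objects, the sound source follows the keyboard wherever the model is placed in the room.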

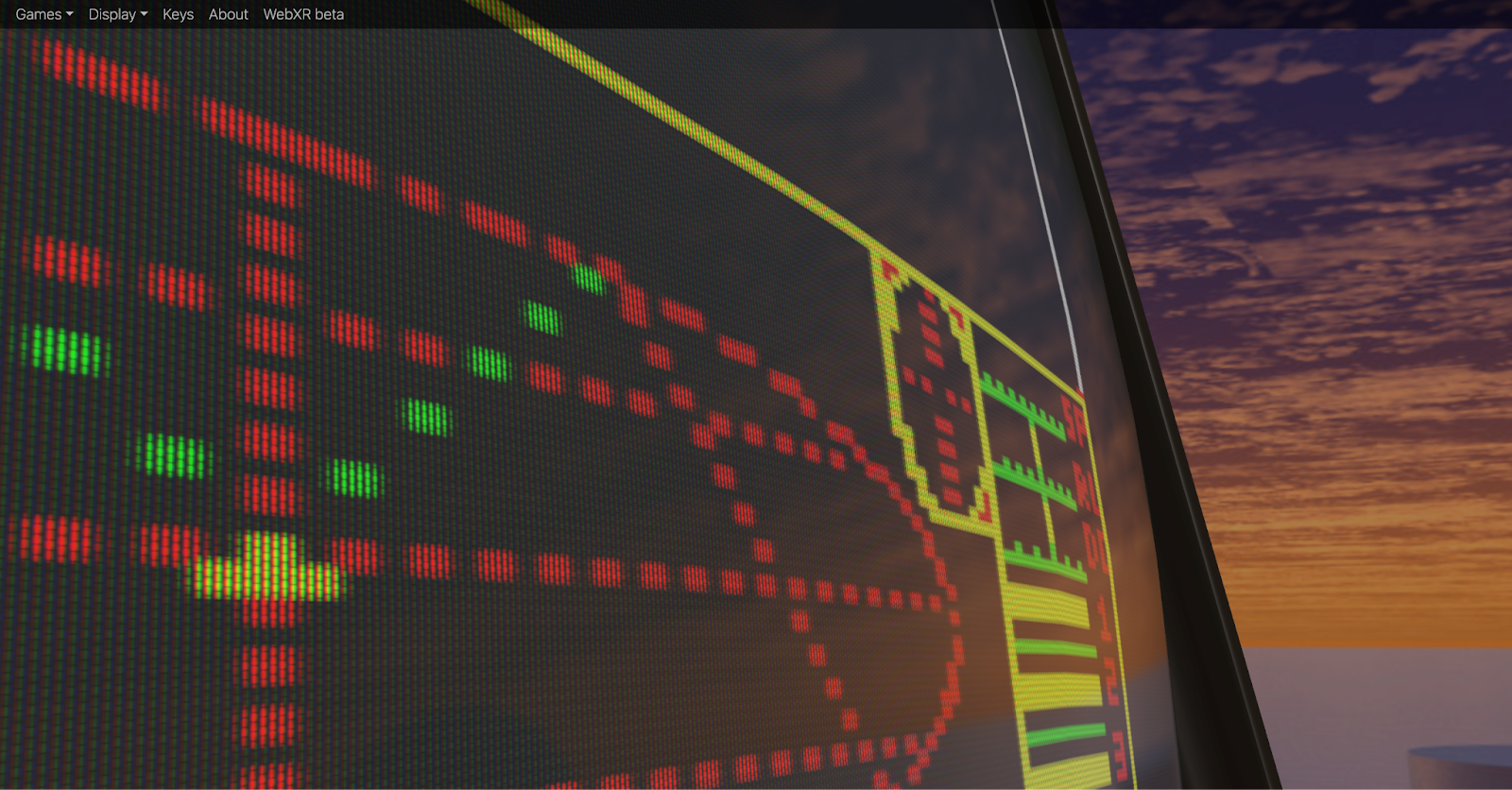

Shaders

One of the most visually impressive aspects was the CRT shader created by Paul Malin in GLSL - you can zoom in and see the phosphor dots and rippling noise in the virtual CRT screen! The realism of the CRT shader is still the best I’ve seen in any model.

The background was equally cool. Instead of some ‘80s style room, the machine was in a retro futuristic landscape under a flaming sunset, just like in the Acorn adverts at the time. The feeling of using the machine back then was futuristic - that’s the feeling I was trying to capture in Virtual Beeb - and Paul’s shader really made it work.

First launch

Having got the help of two world-class developers in Matt and Paul, we were able to crunch out a release in a matter of weeks. Not only was it fully working, it looked stunning! It got huge traction online and fittingly also featured on BBC Television. The (non-VR) Virtual Beeb is still online at virtual.bbcmic.ro - it’s still getting around 5K users per month.

WebXR prototype

The reaction to Virtual Beeb was great, the project was a success, and over. But one response to the announcement caught my eye:

It was my favourite 3D web library Three.js suggesting I add VR capability to the project!

I had an old Oculus DK2 from backing the Elite Dangerous Kickstarter, but this inspired me to get a new Meta Quest 2 for Christmas. In January I added the VR button, and hey presto, it really did 'just work'! The magic of Three.js meant I only needed to add a couple of lines to give the project immersive VR support.
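For reference, the "couple of lines" typically look something like the sketch below, following the pattern in the Three.js WebXR examples. The wrapper function and its parameter names are illustrative; `renderer` is a `THREE.WebGLRenderer` and `VRButton` is the helper shipped in `three/examples/jsm/webxr/VRButton.js`, both passed in by the caller.

```javascript
// Turn an existing three.js scene into an immersive VR experience.
function enableImmersiveVR(renderer, VRButton, container, renderFrame) {
  renderer.xr.enabled = true;                             // let three.js manage the XR session
  container.appendChild(VRButton.createButton(renderer)); // adds the "Enter VR" button to the page
  renderer.setAnimationLoop(renderFrame);                 // used instead of requestAnimationFrame
}
```

In a page this would be called as `enableImmersiveVR(renderer, VRButton, document.body, () => renderer.render(scene, camera))`. The animation loop matters: inside an XR session the headset, not the browser window, decides when frames are drawn.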

It was amazing to be able to walk inside the project in VR. I was actually standing in Paul Malin's retrofuturistic landscape, looking at a ‘solid’ BBC Micro suspended in space. I could feel the weight of the machine and the static crackle of the CRT monitor as I put my face near it. Really, really cool.

The bad news - a whole new interaction method was needed to actually do anything with it. I’d just started a new job and the holidays were over. The side project went back on the shelf.

Hand tracking

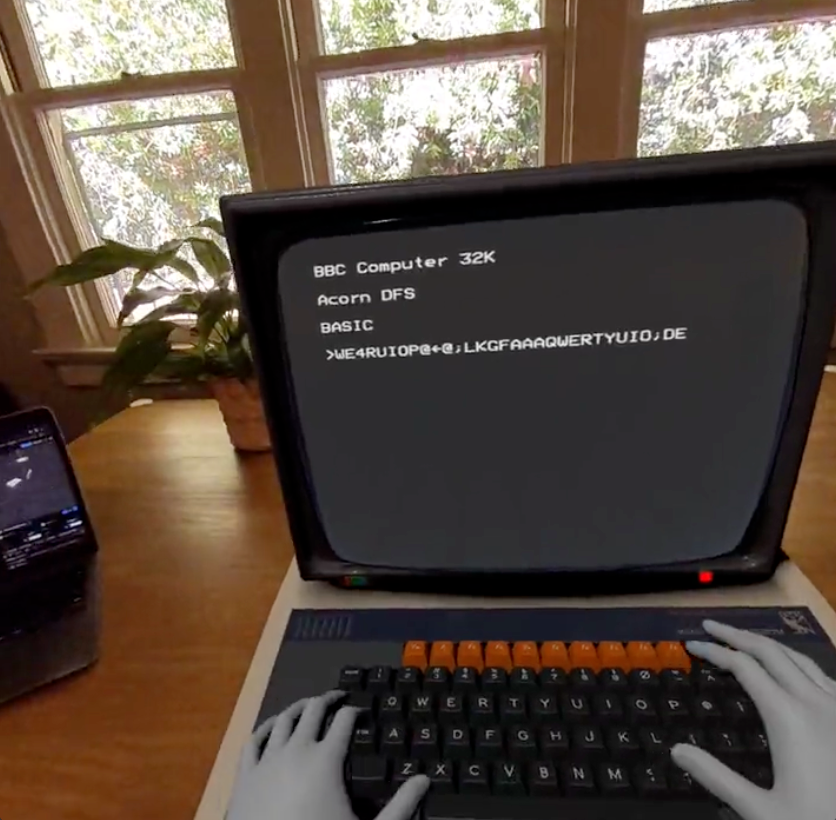

Almost a year later, I chanced upon the Three.js Hand Tracking example and realized I could quickly add support to Virtual Beeb and actually “type” on the keyboard. Yet again, the Three.js example and Oculus Hand Models meant I could drop similar code into my project and get a huge leap in capability:

Things were getting uncanny as I typed with my cold grey VR fingers, and it showed massive potential for a real VR experience people might actually enjoy. I posted my progress on Twitter and was surprised to get a comment from Dave Hill, an engineering manager at Meta:

That was encouraging! But reality started to kick in when I realized how far I was from meeting Meta's WebXR content guidelines, both in terms of UI and performance...

The holidays were over and I was back to actual work. I put the project on the shelf - for good, I thought.

Mixed-Reality

The launch of Meta Quest 3 and excitement around the Apple Vision Pro announcement prompted me to post something cheeky on social media about them needing a killer app (also acknowledging my project had been dragging on for ages…).

To my surprise, I got a response from Mr Doob aka Ricardo Cabello - founder of Three.js - suggesting I should try the newly added Three.js XR button to enable Mixed-Reality:

I was in Spain at that point and didn’t get back for several months to try his suggestion. Once I got back, I asked a friend at Meta if I could get a discount on a newly released Quest 3 to give it a try during the holidays. By swapping in the Three.js XR Button example, my project instantly got WebXR passthru “for free”.

I can’t overstate how empowering these Three.js examples were. My project, that once was limited to a desktop browser, was now solid and sitting convincingly on the table right in front of me!! It was another wow moment for me:

Performance issues

When I'd initially ported to VR, free look was smooth and I was excited it even worked at all. But unfortunately my project was a juddery mess if the user moved position. Reducing the polygon count helped a bit, but not enough. The original Virtual Beeb project was thrown together in record time and only a cursory optimization was done when I exported the model from Blender - I was lucky that it was enough for desktop and mobile. It was not enough for VR.

I’d discovered that (in retrospect, unsurprisingly) a mobile VR port is a serious project. There are two major factors going against you: on one hand, you have a less capable processor dealing with twice the polygons and draw calls needed for stereoscopic vision. On the other, VR is unforgiving of low framerates and jitter. The result? My app would literally nauseate people.

Fixing it looked like a lot of work. But thanks to encouragement and feedback from Ash Nehru and Dave Hill, I now had a clear idea what 'finished' would look like. The end was in sight!

It was time to get serious.

WebXR optimization

Meta has some very good guides on performance optimization for WebXR. I applied pretty much every trick I could to hit the target frame rate: simpler materials, merged meshes, fewer lights, lazy evaluation of shadows, instanced meshes and smaller textures. It quickly became apparent that the app was CPU bound rather than GPU bound - too many draw calls, plus the significant load of running a full emulation of a BBC Micro behind the scenes.

Model

All those keys added to scene complexity. After some decimation and vertex clean-up in Blender, the big model optimization wins were from using an instanced mesh for 69x 1U keys and applying DRACO compression to the source GLTFs for the machine body and keycap types. This reduced the draw calls and polygon count for the model by around 4x; and the result actually looked better!
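A minimal sketch of the instanced-mesh technique described above: one shared keycap geometry, one draw call, and a transform per key. The layout helper, the 19.05 mm pitch and the function names are assumptions for illustration; the `THREE` namespace is passed in rather than imported.

```javascript
// One row of key positions at a fixed pitch (metres). 19.05 mm is the common
// 1U keyboard pitch and is an assumption here, not a measurement of a Beeb.
function rowPositions(count, startX, z, pitch = 0.01905) {
  return Array.from({ length: count }, (_, i) => ({ x: startX + i * pitch, y: 0, z }));
}

// Write the transform of a single instance, e.g. to dip a key when pressed.
function setKeyPosition(THREE, mesh, index, { x, y, z }) {
  const dummy = new THREE.Object3D();
  dummy.position.set(x, y, z);
  dummy.updateMatrix();
  mesh.setMatrixAt(index, dummy.matrix);
  mesh.instanceMatrix.needsUpdate = true; // re-upload instance transforms to the GPU
}

// Draw every 1U keycap with a single InstancedMesh (one draw call in total).
function buildKeycaps(THREE, geometry, material, positions) {
  const mesh = new THREE.InstancedMesh(geometry, material, positions.length);
  positions.forEach((p, i) => setKeyPosition(THREE, mesh, i, p));
  return mesh;
}
```

With 69 positions this replaces 69 separate meshes with one; pressing a key then only needs `setKeyPosition` on that key's index with a slightly lower `y`.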

Scene complexity (immersive XR mode doubles these figures):

virtual.bbcmic.ro (left) - calls: 102, triangles: 173K

xr.bbcmic.ro (right) - calls: 23, triangles: 38K

Emulation Web Worker

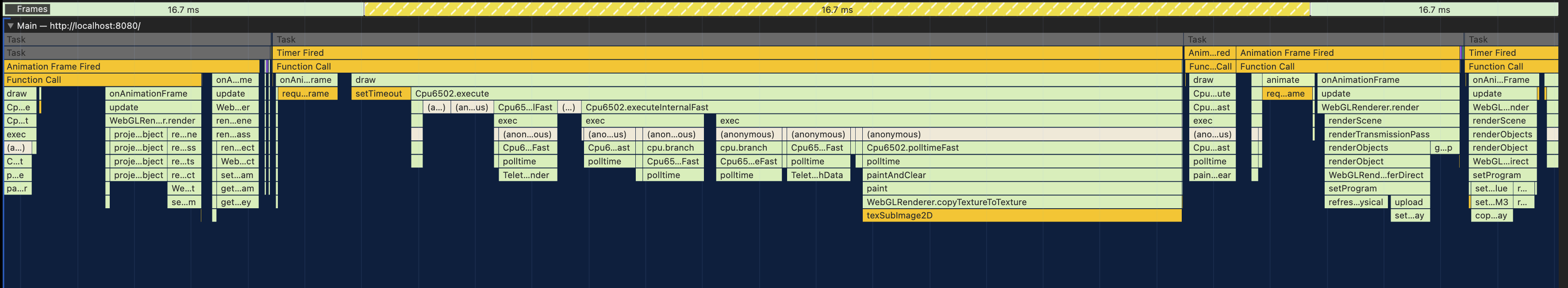

The elephant in the room (or rather, on the main thread) was a whole BBC Micro emulator making the renderer judder:

(Graph shows performance profiling on desktop Chrome with 6x throttling, targeting 16.7ms / 60 fps for illustration purposes - this was hitting ~45fps on Meta Quest 3 before optimization)

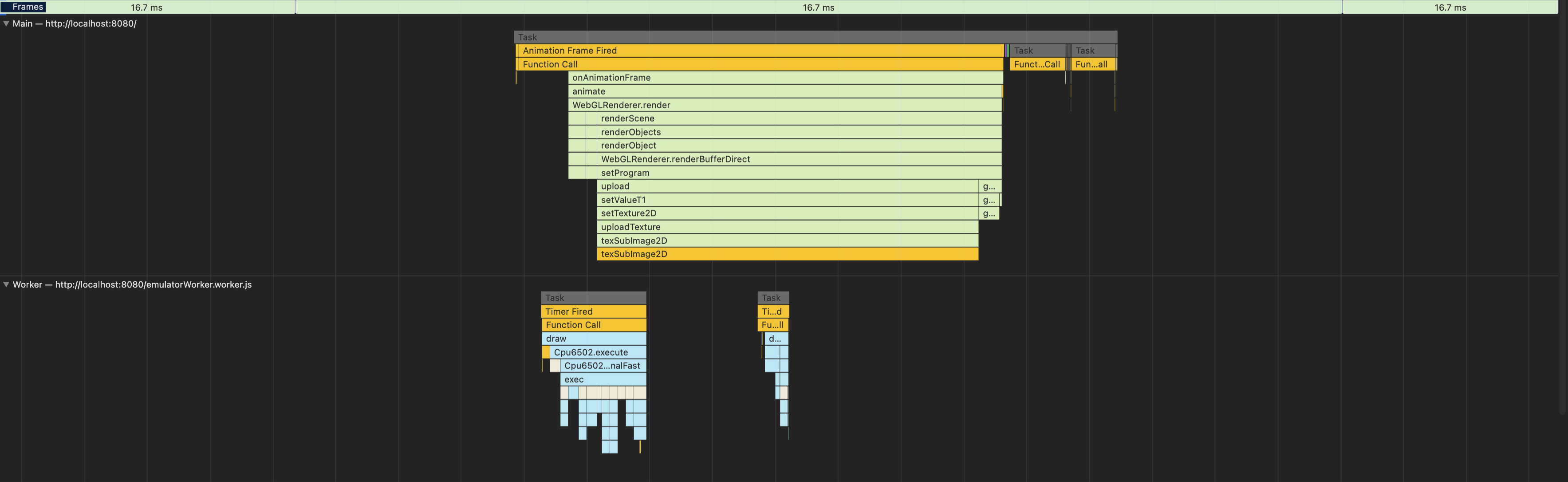

The solution lay in putting JSBeeb in a Web Worker, and using message passing to communicate key presses, sound sample buffer events, LED light changes, etc. between the emulator thread and the scene renderer on the main thread. This smoothed things out considerably, but for good measure I also lowered the target frame rate from 90 fps to 72 fps.
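The message-passing split can be sketched roughly as below. The message type names (`keydown`, `keyup`, `led`) and the `emulator` object with its `keyDown`/`keyUp` methods are placeholders for illustration, not JSBeeb's actual API; `worker` is anything with the standard `postMessage`/`onmessage` Worker interface.

```javascript
// Main-thread side: route messages coming from the emulator worker to
// handlers, and expose helpers for sending key events the other way.
function connectEmulator(worker, handlers) {
  worker.onmessage = (event) => {
    const { type, payload } = event.data;
    const handler = handlers[type];
    if (handler) handler(payload); // unknown message types are ignored
  };
  return {
    keyDown: (code) => worker.postMessage({ type: 'keydown', payload: { code } }),
    keyUp: (code) => worker.postMessage({ type: 'keyup', payload: { code } }),
  };
}

// Worker side: build the function to assign to `self.onmessage`.
// `emulator` is a hypothetical wrapper around the emulator core.
function createWorkerHandler(emulator) {
  return (event) => {
    const { type, payload } = event.data;
    if (type === 'keydown') emulator.keyDown(payload.code);
    else if (type === 'keyup') emulator.keyUp(payload.code);
  };
}
```

On the main thread the handlers would do things like `{ led: (state) => updateLedMaterial(state) }` (a hypothetical function), so the render loop never waits on the emulator; it only reacts to small messages.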

One final optimization was the emulator video handling. I removed the frame blanking (paintAndClear()) and the double buffering (WebGLRenderer.copyTextureToTexture()) from the emulator implementation. Instead, I used a SharedArrayBuffer so the frame buffer from the emulation Web Worker was accessible by the main thread. Every VSYNC (emulator screen refresh at 50Hz) an updated screen texture was copied to the GPU by setting needsUpdate = true on the Three.js texture. I implemented conditional blanking based on writes to certain CRTC registers in the JSBeeb emulation (Rich Talbot-Watkins was to thank for that tip). This avoided flickering (given I'd done away with the double buffer) but still cleared garbage from the screen borders on emulator MODE changes when necessary, rather than on every VSYNC as before.
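A simplified sketch of the shared frame buffer and conditional blanking. The function names are illustrative, and the set of 6845 CRTC registers treated as "geometry changing" is an assumption; the source does not say which registers the project checks.

```javascript
// Allocate one RGBA frame in shared memory. The buffer is created once and
// posted to the other thread; both sides then see the same bytes, no copying.
function createSharedFrame(width, height) {
  return { width, height, buffer: new SharedArrayBuffer(width * height * 4) };
}

// Each thread wraps the shared buffer in its own typed-array view.
function pixelsOf(frame) {
  return new Uint8Array(frame.buffer);
}

// 6845 CRTC registers whose writes change the displayed geometry:
// R1/R2 (horizontal displayed, horizontal sync position) and
// R6/R7 (vertical displayed, vertical sync position). Assumed set.
const GEOMETRY_REGISTERS = new Set([1, 2, 6, 7]);

// Worker side, once per emulated frame: clear the frame only if one of the
// registers written since the last frame affects geometry.
function maybeBlank(frame, registersWritten) {
  const changed = registersWritten.some((r) => GEOMETRY_REGISTERS.has(r));
  if (changed) pixelsOf(frame).fill(0);
  return changed;
}

// Main-thread side, on each vsync message: flag the texture so three.js
// re-uploads the pixel data on the next render.
function onVsync(texture) {
  texture.needsUpdate = true;
}
```

One practical caveat: browsers only expose `SharedArrayBuffer` on cross-origin isolated pages, so the site has to be served with the appropriate COOP and COEP headers.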

Result

We'd done it. xr.bbcmic.ro now ran at a smooth 72 fps on Meta Quest 3 in passthru with hand tracking - although if you are very determined you can still get the fps to dip with some violent and sustained mashing of the VR keyboard (it was part of the testing!).

Apple Vision Pro support

I'd been developing this project solely with my Meta Quest 3, but the beauty of WebXR is it should work with any compatible VR headset.

I wasn't able to test it myself, so it was great to confirm xr.bbcmic.ro also worked on Apple Vision Pro (in VR mode only) without modification, courtesy of James Thomson:

Conclusion

What started as a picture in a book had become a full simulation in mixed-reality. Pretty amazing really.

My side-project had surfed a wave of progress in VR from Meta Quest making it affordable, to Three.js & WebXR support in Meta Quest 3 and Apple Vision Pro. It was a lot of fun and I learnt a ton along the way - I've applied Three.js professionally for IoT and robotics visualizations since! Although, I don't plan on embarking on a side project this big again for a while.

The project wouldn't have been possible without the JSBeeb emulator by Matt Godbolt, models by Ant Mercer & Paul Ledger, shaders by Paul Malin, and textures by Stew B & Adrian G. Thanks also to Ash Nehru, Dave Hill, Kieran Connell, Mark Moxon, Rich Talbot-Watkins, Tom Seddon.

If you've got a WebXR compatible browser and virtual reality headset (e.g. Meta Quest 3 or Apple Vision Pro) you can try xr.bbcmic.ro here:

Hope you've enjoyed this blog. There's a ton of interesting stuff about the implications of AI and interaction design for mixed-reality (e.g. building a virtual touch UI and efficiently detecting collisions and maintaining state for 74 keys...) that I had to learn and implement for the project that I've omitted here - that might go in a part two...

You can get in touch with me here